AI Hallucination Cases Database – Damien Charlotin


Last checked: 2026-03-02T21:38

# Description

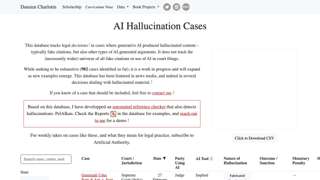

This database tracks legal cases worldwide in which generative AI produced hallucinated content (primarily fake citations, but also fabricated arguments and false quotes) that was submitted in court filings and identified by judges or tribunals. It currently contains 982 documented cases across multiple jurisdictions and serves as a resource for understanding the legal consequences of AI hallucinations in judicial proceedings.