Vite + React + TS

alive

HTTP 200

Last checked: 2026-03-02T21:38

Description

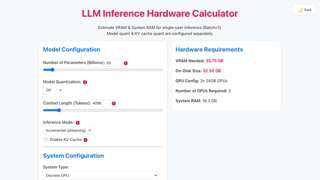

The LLM Inference Hardware Calculator estimates the hardware and infrastructure needed to run large language models (LLMs) for inference. Users enter details about their model and inference workload, and the calculator recommends the compute resources, memory, and other hardware required.
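
To make the idea concrete, here is a minimal sketch of the kind of memory estimate such a calculator might perform. It is not the tool's actual implementation: the type names, field names, and the 20% overhead factor are assumptions chosen for illustration. It uses two widely used approximations, namely weight memory as parameter count times bytes per parameter, and KV-cache memory as 2 × layers × KV heads × head dimension × context tokens × batch size × bytes per value.

```typescript
// Hypothetical input shapes; these names are illustrative, not the calculator's schema.
interface ModelSpec {
  paramsBillions: number; // total parameters, in billions
  bytesPerParam: number;  // e.g. 2 for FP16/BF16, 1 for INT8, 0.5 for 4-bit
  numLayers: number;      // transformer layers
  numKvHeads: number;     // key/value heads per layer
  headDim: number;        // dimension of each attention head
}

interface Workload {
  contextTokens: number;   // tokens held in the KV cache per sequence
  batchSize: number;       // concurrent sequences
  kvBytesPerValue: number; // e.g. 2 for an FP16 cache
}

const BYTES_PER_GB = 1e9;

// Memory for the model weights alone.
function weightMemoryGB(m: ModelSpec): number {
  return (m.paramsBillions * 1e9 * m.bytesPerParam) / BYTES_PER_GB;
}

// Memory for the KV cache; the leading 2 counts one key and one value tensor per layer.
function kvCacheMemoryGB(m: ModelSpec, w: Workload): number {
  const bytes =
    2 * m.numLayers * m.numKvHeads * m.headDim *
    w.contextTokens * w.batchSize * w.kvBytesPerValue;
  return bytes / BYTES_PER_GB;
}

// Total estimate with a flat overhead for activations and runtime buffers.
// The 0.2 default is an assumed placeholder, not a measured value.
function estimateTotalGB(m: ModelSpec, w: Workload, overheadFraction = 0.2): number {
  return (weightMemoryGB(m) + kvCacheMemoryGB(m, w)) * (1 + overheadFraction);
}

// Illustrative 7B-parameter configuration (made-up numbers for the example).
const exampleModel: ModelSpec = {
  paramsBillions: 7, bytesPerParam: 2, numLayers: 32, numKvHeads: 32, headDim: 128,
};
const exampleWorkload: Workload = { contextTokens: 4096, batchSize: 1, kvBytesPerValue: 2 };

console.log(`weights:  ${weightMemoryGB(exampleModel).toFixed(2)} GB`);
console.log(`KV cache: ${kvCacheMemoryGB(exampleModel, exampleWorkload).toFixed(2)} GB`);
console.log(`total:    ${estimateTotalGB(exampleModel, exampleWorkload).toFixed(2)} GB`);
```

A real calculator would likely also account for throughput targets, interconnect bandwidth, and multi-GPU sharding, which this sketch leaves out.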